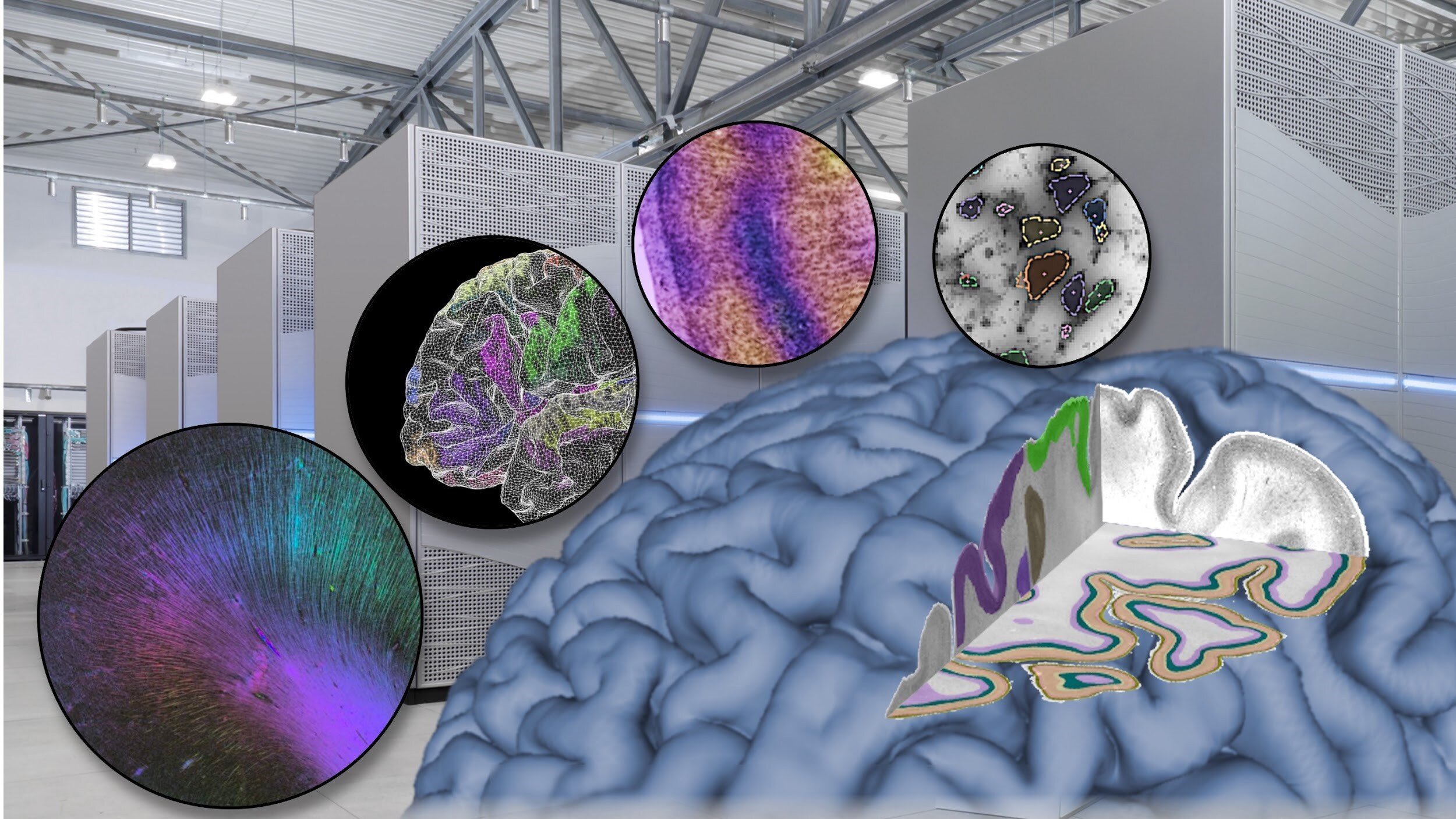

Brain Researchers Outline the Need for Exascale Computing

Katrin Amunts, a brain scientist, and Thomas Lippert, a supercomputing expert, explain in the latest issue of Science how advances in neuroscience are driving the need for high-performance computing technology and, eventually, exascale computing capacity.

“Understanding the brain in all its complexity requires insights from multiple scales—from genomics, cells and synapses to the whole-organ level. This means working with large amounts of data, and supercomputing is becoming an indispensable tool to tackle the brain,” says Katrin Amunts, Scientific Director of the Human Brain Project (HBP), Director of the C. and O. Vogt-Institute of Brain Research, Universitätsklinikum Düsseldorf and Director of the Institute of Neuroscience and Medicine (INM-1) at Research Centre Jülich.

“It’s an exciting time in supercomputing,” says Thomas Lippert, Director of the Jülich Supercomputing Centre and leader of supercomputing in the Human Brain Project. “We get a lot of new requests from researchers in the neuroscience community who need powerful computing to tackle the brain’s complexity. In response, we are developing new tools tailored to investigating the brain.”

The mature human brain contains about 86 billion neurons. An important line of research zooms in on the brain’s cellular and subcellular organization to uncover the diverse elements of neural connectivity. Bridging the many spatial scales involved is, however, extremely difficult: from synapses (nanometers), through single neurons and glial cells (micrometers), up to the entire organ.

Challenges in scanning time, data storage, and data processing do not begin with human brain studies; they are already major factors when studying the brains of other vertebrates and even invertebrates. For example, reconstructing the synaptic connections of the adult fruit fly brain, which has about 100,000 neurons, produced 21 million camera images and a 106-terabyte dataset.
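As a rough illustration of what these figures mean, the sketch below divides the reported dataset size by the number of images and by the number of neurons. It assumes decimal units (1 TB = 10¹² bytes) and treats the rounded counts as exact; the results are simple averages, not properties of any particular image or neuron.

```python
# Back-of-the-envelope averages for the fruit fly dataset described above.
# Assumption: decimal units (1 TB = 10**12 bytes); rounded counts taken as exact.
TB = 10**12
MB = 10**6

dataset_bytes = 106 * TB      # reported dataset size
images = 21_000_000           # reported number of camera images
neurons = 100_000             # approximate neuron count of the adult fly brain

bytes_per_image = dataset_bytes / images
bytes_per_neuron = dataset_bytes / neurons

print(f"average per image:  {bytes_per_image / MB:.2f} MB")
print(f"average per neuron: {bytes_per_neuron / 10**9:.2f} GB")
```

This works out to roughly 5 MB per image and about 1 GB of raw data per neuron, even for an invertebrate brain.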

A 1 mm³ portion of the human cerebral cortex was recently reconstructed in three dimensions, producing 1.4 petabytes of data. Although 1 mm³ of cerebral cortex corresponds to only 0.00007 percent of total brain volume, acquiring these data required a high-speed multibeam electron microscope and 326 days of scanning. According to this research, insights into the detailed architecture of the brain may yield new knowledge about cortical networks and provide new quantitative data describing tissue properties, with implications for understanding brain activity.
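The percentage quoted above also fixes an implied whole-brain volume, which allows a deliberately naive extrapolation from the 1 mm³ sample to an entire human brain. The sketch assumes that data volume and scan time scale linearly with tissue volume at the same resolution, with a single microscope and decimal units; it is an order-of-magnitude illustration, not an estimate reported by the study.

```python
# Naive linear extrapolation from the 1 mm^3 cortex sample to a whole brain.
# Assumptions: same resolution and scan throughput everywhere, one microscope,
# decimal units (1 PB = 10**15 bytes, 1 ZB = 10**21 bytes).
PB = 10**15
ZB = 10**21

sample_mm3 = 1.0
sample_bytes = 1.4 * PB
sample_scan_days = 326
fraction_of_brain = 0.00007 / 100   # "0.00007 percent" as a plain fraction

brain_mm3 = sample_mm3 / fraction_of_brain   # implied whole-brain volume
scale = brain_mm3 / sample_mm3               # how many samples fit in a brain

whole_brain_bytes = sample_bytes * scale
whole_brain_scan_years = sample_scan_days * scale / 365.25

print(f"implied brain volume: {brain_mm3 / 1000:.0f} cm^3")
print(f"extrapolated data:    {whole_brain_bytes / ZB:.1f} ZB")
print(f"extrapolated scan:    {whole_brain_scan_years:,.0f} years")
```

Under these assumptions, the implied brain volume is about 1.4 liters, and a whole brain at the same resolution would amount to roughly 2 zettabytes and about 1.3 million years of scanning on one microscope, which is why such whole-brain figures can only be estimated.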

Because human brains differ significantly from those of other species, particularly in their connectivity, this type of examination of human brain tissue is a vital complement to studies of other species’ brains. During mammalian evolution, for example, the volume of the cerebral white matter, which contains the axons that support long-range connections, increased faster than that of the gray matter, which contains the neuronal cell bodies.

In human brains, however, tracing axons and their synapses over their full extent, which can reach several centimeters from their cell bodies, is considerably more difficult than in rodent or invertebrate brains.

Read the complete article on Science.org.

Analyzing the axons of a whole human brain at 60-µm isotropic resolution using 3D polarized light imaging (3D-PLI) (11) would require 8.3 terabytes of storage and several days on existing supercomputers to optimize 2 × 10¹⁰ spins. Such large datasets also pose substantial challenges for data visualization. ParaView (www.paraview.org), for example, which is built on the open-source Visualization Toolkit (VTK), can use parallel graphics processing units (GPUs) and has been applied to render and visualize 3D-PLI data. Going further and optimizing fiber orientations obtained with 3D-PLI at 1.3-µm in-plane resolution (i.e., single axons) with 10¹³ spins would require 3.2 petabytes of storage and years of computation. This is not possible with current petascale technology but could be accomplished with future exascale computing power, that is, computers capable of executing 10¹⁸ floating-point operations per second (i.e., 1 exaflop). Handling such large datasets, however, places massive demands on input/output (I/O). More efficient I/O procedures and algorithms are emerging and should help, but the computational challenges remain extraordinarily high.
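The storage figures quoted above can be cross-checked against the spin counts. The sketch below simply divides storage by the number of spins for both 3D-PLI scenarios, assuming decimal units; it says nothing about how 3D-PLI data are actually laid out on disk, and the scenario labels are illustrative names.

```python
# Compare storage per spin for the two 3D-PLI scenarios quoted above.
# Assumption: decimal units (1 TB = 10**12 bytes, 1 PB = 10**15 bytes).
TB = 10**12
PB = 10**15

scenarios = {
    "60 um isotropic": {"spins": 2 * 10**10, "bytes": 8.3 * TB},
    "1.3 um in-plane": {"spins": 10**13, "bytes": 3.2 * PB},
}

# Average storage per spin in each scenario.
for name, s in scenarios.items():
    print(f"{name}: {s['bytes'] / s['spins']:.0f} bytes per spin")

# Growth factors when moving from the coarse to the fine scenario.
coarse, fine = scenarios["60 um isotropic"], scenarios["1.3 um in-plane"]
print(f"spin count grows {fine['spins'] / coarse['spins']:.0f}x")
print(f"storage grows    {fine['bytes'] / coarse['bytes']:.0f}x")
```

Both cases come to a few hundred bytes per spin (about 415 and 320), so storage grows roughly, though not exactly, in step with the number of spins: about 500 times more spins for about 386 times more storage.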

Data size rises steeply, by orders of magnitude, as the resolution of studies on the human brain connectome and organization increases. The two largest bars are estimates, because such data do not exist for the human brain.

GRAPHIC: K. FRANKLIN/SCIENCE
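One reason data size climbs so steeply with resolution is geometric: for a fixed tissue volume, the number of voxels grows with the cube of the inverse voxel edge length, so each tenfold gain in isotropic resolution multiplies the voxel count by 1,000. The sketch below shows this for 1 mm³ of tissue. The voxel sizes are hypothetical round numbers, not the resolutions plotted in the figure, and bytes per voxel are left out because they depend on the imaging method.

```python
# Voxel count for a fixed tissue volume at different isotropic resolutions.
# The voxel edge lengths below are hypothetical round numbers for illustration.
def voxel_count(volume_mm3: float, voxel_edge_um: float) -> float:
    """Number of cubic voxels with edge voxel_edge_um (micrometers) in volume_mm3."""
    voxel_edge_mm = voxel_edge_um / 1000.0
    return volume_mm3 / voxel_edge_mm**3

for edge_um in (100.0, 10.0, 1.0, 0.1):
    print(f"{edge_um:>6} um voxels: {voxel_count(1.0, edge_um):.0e} per mm^3")
```

Going from 100-µm to 0.1-µm voxels raises the count from about a thousand to about a trillion per cubic millimeter, before any per-voxel data are even stored.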


Exascale computing refers to computing systems that can perform at least 10¹⁸ floating-point operations per second (1 exaFLOPS). As of January 2021, no single machine had yet achieved this goal, although systems were being developed to do so. Folding@home, a distributed computing network, achieved one exaFLOPS of computing capacity in April 2020.
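To make the thousandfold jump from petascale to exascale concrete, the sketch below converts a fixed amount of work into wall-clock time at 10¹⁵ and 10¹⁸ floating-point operations per second. The three-year workload is a hypothetical example, and the sketch assumes the code sustains the full rate on both machines, which real applications rarely do.

```python
# Wall-clock time for a fixed workload at petascale vs. exascale speeds.
# Assumptions: hypothetical workload, perfect scaling, sustained peak rate.
PETAFLOPS = 10**15   # floating-point operations per second
EXAFLOPS = 10**18

SECONDS_PER_YEAR = 365.25 * 24 * 3600

def runtime_seconds(total_flop: float, flops_rate: float) -> float:
    """Time to execute total_flop operations at a sustained rate of flops_rate."""
    return total_flop / flops_rate

# Hypothetical workload that keeps a 1-petaflop/s machine busy for three years.
workload = 3 * SECONDS_PER_YEAR * PETAFLOPS

peta_years = runtime_seconds(workload, PETAFLOPS) / SECONDS_PER_YEAR
exa_hours = runtime_seconds(workload, EXAFLOPS) / 3600

print(f"at 1 petaflop/s: {peta_years:.1f} years")
print(f"at 1 exaflop/s:  {exa_hours:.1f} hours")
```

Under these idealized assumptions, three years of petascale computation shrinks to about 26 hours at exascale; in practice, I/O and communication overheads narrow that gap.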

The Human Brain Project’s research infrastructure EBRAINS tackles the big data challenge of neuroscience in Europe. It provides brain researchers with a variety of tools, data, and compute resources. This includes access to supercomputing equipment through the Fenix Shared Infrastructure, which was created as part of the Human Brain Project by Europe’s major supercomputing centers and will benefit communities outside brain research.

Within the next five years, Europe is aiming to deploy its first two exascale supercomputers. They will be acquired by the European High-Performance Computing Joint Undertaking (EuroHPC JU), a joint initiative between the EU, European countries, and private partners.